Labdoor: what this certification actually tells you (and what it doesn’t)

Labdoor operates differently from most supplement certification programs. NSF and USP start with the manufacturer: they audit facilities and production processes and review compliance documentation. Labdoor starts with the product on the shelf. It buys supplements at retail, sends them to an FDA-registered laboratory, and publishes the results. The model is closer to consumer reporting than traditional certification, and that distinction matters for understanding what a Labdoor score does and doesn’t mean.

This article covers how Labdoor’s testing works, what the scores actually measure, where the methodology has real limitations, and how it compares to other certification programs. If you’re deciding whether to use Labdoor rankings when choosing supplements, the honest answer is that they’re useful for some things and misleading for others.

This article is part of the Sighed Effects certification series, which also includes guides to NSF Certified for Sport, USP Verified, Informed Choice, BSCG, and our certification comparison guide.

What Labdoor is and how it got started

Labdoor was founded in May 2012 by Neil Thanedar along with co-founders Rafael Ferreira and Helton Souza. [1: Labdoor. Wikipedia, 2025. https://en.wikipedia.org/wiki/Labdoor] It came out of Y Combinator (batch W15) and has raised approximately $11 million from investors including Rock Health, Floodgate, and Mark Cuban. [2: Y Combinator. Labdoor Company Profile. https://www.ycombinator.com/companies/labdoor] The premise was straightforward: dietary supplements in the US don’t require pre-market FDA approval, so consumers have limited ways to verify that what’s on the label matches what’s in the bottle. Labdoor set out to test products and publish the data.

The company buys supplements from retail outlets and online stores, sends them to an FDA-registered laboratory for chemical analysis, and then scores and ranks the products based on the results. [3: Labdoor. About Us. https://labdoor.com/about] Since November 2013, Labdoor’s primary revenue has come from affiliate marketing: when consumers buy products through Labdoor’s site, the company keeps a percentage of each sale. Labdoor also offers paid certification services to manufacturers who want their products tested and, if they pass, the right to use the “Labdoor Certified” badge. [4: Labdoor. Wikipedia, 2025. https://en.wikipedia.org/wiki/Labdoor]

That business model is worth understanding up front. Labdoor is a for-profit company funded by affiliate revenue and venture capital, not a nonprofit standards body like USP or a government-adjacent laboratory like LGC. This doesn’t invalidate its testing, but it does mean the incentive structure is different from certification programs where revenue comes primarily from manufacturer fees tied to compliance audits.

How the testing works

Labdoor’s testing process centers on the finished product. The company purchases supplements the way a consumer would, from store shelves or online retailers, and sends unopened products to an FDA-registered laboratory for analysis. The products aren’t hand-selected by manufacturers, and brands often don’t know they’re being tested until the results go live.

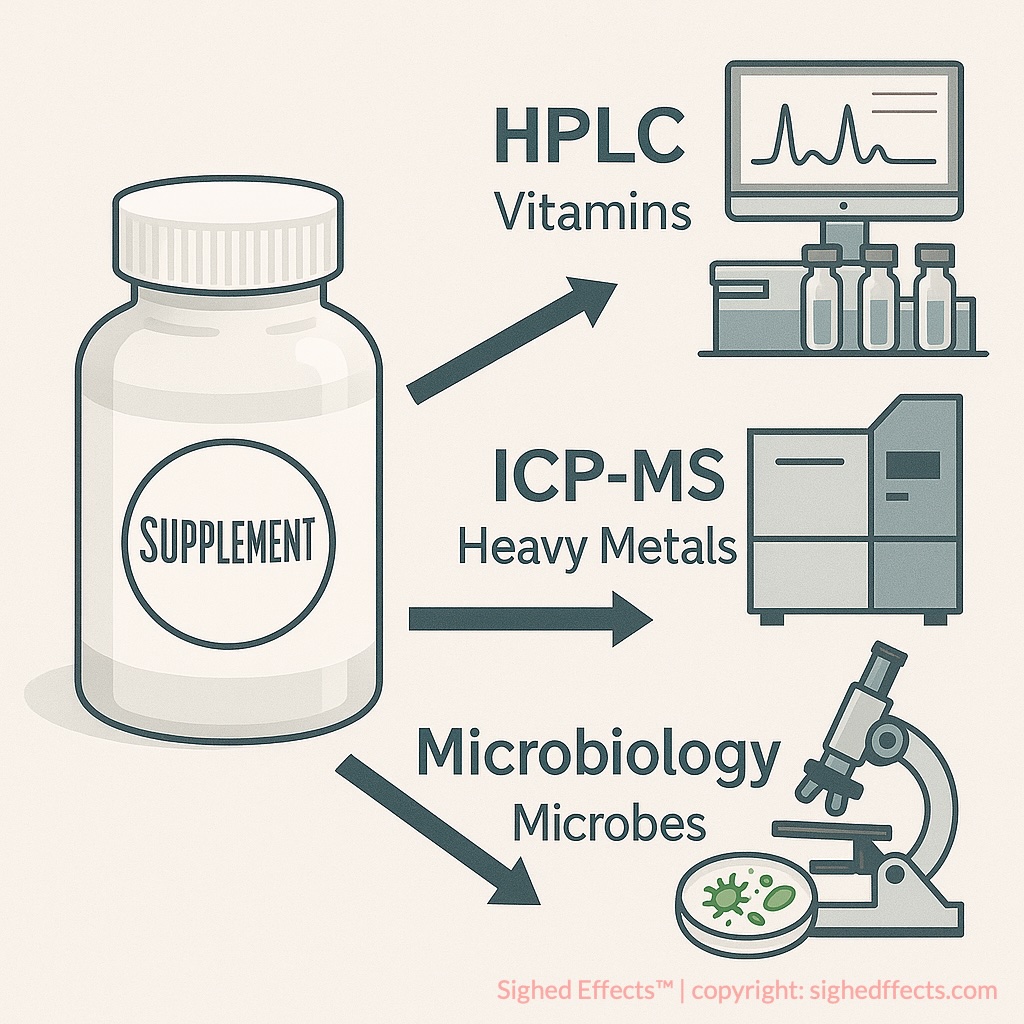

The lab analysis typically includes active ingredient quantification (measuring what’s actually in the product versus label claims), heavy metal screening (arsenic, cadmium, lead, mercury), and in some categories, microbiological testing. The analytical methods used are standard for the field: high-performance liquid chromatography (HPLC) for ingredient identification and quantification, inductively coupled plasma mass spectrometry (ICP-MS) for heavy metals, and gas chromatography-mass spectrometry (GC-MS) where applicable. [5: Labdoor. Our Scoring Process. https://labdoor.com/about/scores] Labdoor follows compendial testing methods (USP, AOAC) rather than developing proprietary protocols.

The results feed into Labdoor’s scoring system, which rates products on a 0-100 scale across five dimensions: label accuracy, product purity, nutritional value, ingredient safety, and projected efficacy. Each dimension contributes to the overall score, though Labdoor does not publicly disclose the specific weighting formula for how these dimensions combine into the final number. [6: Illuminate Labs. Labdoor Review: Are Their Rankings Accurate? 2025. https://illuminatelabs.org/blogs/health/labdoor-review] Products are ranked within their supplement category (protein powders against other protein powders, fish oils against other fish oils), so scores represent relative performance within a peer group.

Labdoor publishes its results for free on its website, including ingredient measurements, detected contaminant levels, and the category ranking. Brands that pass testing can optionally license the “Labdoor Certified” badge for marketing use, but the underlying data goes public regardless of whether the brand participates commercially.

What the scores actually measure

The five scoring dimensions sound comprehensive, but they’re doing different amounts of work depending on the product.

The two dimensions that carry the most concrete information are label accuracy and product purity. Label accuracy is straightforward: the lab measures the actual content of each active ingredient and compares it to the label claim. If a vitamin D supplement says 5,000 IU per serving and the lab finds 4,800 IU, that’s a small deviation. If it finds 3,200 IU, that’s a problem. Labdoor penalizes underdosing more heavily than overdosing. [7: Labdoor. Our Scoring Process. https://labdoor.com/about/scores] Product purity is similarly concrete: either the product is free of concerning heavy metal contamination, or it is flagged for elevated levels of lead, arsenic, cadmium, or mercury.
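The label-accuracy comparison is simple arithmetic, and it can be sketched in a few lines. This is only an illustration of the percent-of-claim calculation using the vitamin D figures above; the function names are hypothetical, and it does not reproduce Labdoor’s actual penalty formula, which is not spelled out here.

```python
def percent_of_claim(measured: float, claimed: float) -> float:
    """Return the measured amount as a percentage of the label claim."""
    if claimed <= 0:
        raise ValueError("label claim must be positive")
    return measured * 100 / claimed


def deviation_points(measured: float, claimed: float) -> float:
    """Signed deviation from the label in percentage points (negative = underdosed)."""
    return percent_of_claim(measured, claimed) - 100


# Vitamin D figures from the example above (label claim: 5,000 IU per serving).
for measured_iu in (4800, 3200):
    pct = percent_of_claim(measured_iu, 5000)
    dev = deviation_points(measured_iu, 5000)
    print(f"{measured_iu} IU -> {pct:.0f}% of claim ({dev:+.0f} points)")
```

The first case lands at 96% of the claim and the second at 64%, which is the gap between a small deviation and a product that is clearly underdosed.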

The remaining three dimensions are more interpretive. Nutritional value factors in the amount of beneficial content per unit cost, which introduces subjectivity since “value” depends on how you weight price against other considerations. Ingredient safety assesses whether dosages approach or exceed established tolerable upper intake levels (ULs), penalizing products as they get closer to safety ceilings. And projected efficacy, the most speculative of the five, estimates whether the product’s dosing aligns with published clinical research on those ingredients. Labdoor is comparing label doses to what clinical trials used, but it’s not measuring absorption, bioavailability in the specific formulation, or actual outcomes in users.

The problem is that the weighting between these dimensions isn’t transparent. A product could score well overall despite significant issues in one dimension if other dimensions pull the average up. This has produced some counterintuitive results.
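To see how an average can hide a weak dimension, consider a rough sketch. Everything in it is an assumption made for illustration: the sub-scores are invented, and the equal weights are a stand-in, since Labdoor does not publish its real weighting.

```python
# Hypothetical sub-scores (0-100) for one product; not real Labdoor data.
sub_scores = {
    "label_accuracy": 40,
    "product_purity": 95,
    "nutritional_value": 95,
    "ingredient_safety": 90,
    "projected_efficacy": 95,
}

# ASSUMPTION: equal weights. Labdoor's actual weighting is not public.
weights = {name: 1 for name in sub_scores}


def composite(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of the dimension scores."""
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in scores) / total_weight


overall = composite(sub_scores, weights)
weakest = min(sub_scores, key=sub_scores.get)
print(f"overall: {overall:.1f}, weakest dimension: {weakest} ({sub_scores[weakest]})")
```

Under these made-up numbers the composite comes out at 83 even though label accuracy sits at 40, which is why the individual dimension scores deserve a look before the headline number.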

Where the methodology has real problems

Labdoor’s model has genuine strengths: buying products at retail eliminates the sample-selection problem, and publishing results for free creates public accountability. But the scoring system has drawn legitimate criticism that’s worth understanding before you rely on it.

The most substantive concern involves how the scoring handles label inaccuracy. Illuminate Labs documented a case where a magnesium supplement tested at 263% of its label claim and still received an overall score of 87.1. [8: Illuminate Labs. Labdoor Review: Are Their Rankings Accurate? 2025. https://illuminatelabs.org/blogs/health/labdoor-review] Magnesium overdosing can cause gastrointestinal distress and, at high enough levels, cardiac issues. A scoring system that rates a product well despite delivering more than two and a half times the stated dose of a mineral with a defined tolerable upper limit raises questions about how the dimensions interact. Labdoor later removed this particular product from its site, but the underlying methodology concern remains.

The same review found a B-complex supplement scoring well despite delivering barely over 50% of stated folic acid and pantothenic acid, and over 300% of claimed vitamin B12. If a score is supposed to tell you whether a product matches its label, these examples suggest the scoring formula can obscure the very information it’s meant to surface.

There’s also the single-batch limitation. Labdoor typically tests one lot of each product. Supplement manufacturing is variable: batches differ, suppliers change, and formulations get revised. A score based on one purchase at one point in time doesn’t tell you much about the next batch off the line. Labdoor does retest some products, and it includes testing dates in its reports, but not all products are retested regularly. Some reports are years old. If a supplement was reformulated since Labdoor last tested it, the score is stale.
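Checking a report’s age is easy to do by hand, but a small sketch makes the idea concrete. The two-year cutoff and the dates below are arbitrary assumptions chosen for illustration; neither comes from Labdoor.

```python
from datetime import date


def report_age_years(tested_on: date, today: date) -> float:
    """Approximate age of a test report in years."""
    return (today - tested_on).days / 365.25


def is_stale(tested_on: date, today: date, max_age_years: float = 2.0) -> bool:
    """True if the report is older than the chosen cutoff (2 years is an arbitrary default)."""
    return report_age_years(tested_on, today) > max_age_years


# Hypothetical test dates, for illustration only.
print(is_stale(date(2021, 3, 1), today=date(2025, 3, 1)))  # four-year-old report
print(is_stale(date(2024, 9, 1), today=date(2025, 3, 1)))  # six-month-old report
```

A passing age check says nothing about reformulation, so it is still worth comparing the current label against the one in the report.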

The retracted CBC testing is also worth noting. In 2015, Labdoor organized third-party testing of supplement products for the Canadian Broadcasting Corporation. The results were initially published and then retracted after repeat testing showed the supplements were correctly labeled with no contamination or deficiency issues. [9: Labdoor. Wikipedia, 2025. https://en.wikipedia.org/wiki/Labdoor] This doesn’t necessarily reflect on Labdoor’s current testing practices, but it is part of the company’s track record.

Finally, the raw test data isn’t always as transparent as Labdoor’s reputation suggests. Several reviews have noted that non-certified products sometimes lack testing dates, detailed methodology disclosures, or full breakdowns of sub-scores. The level of detail varies by product and by whether the brand is paying for the certification program.

How Labdoor compares to NSF, USP, and Informed Choice

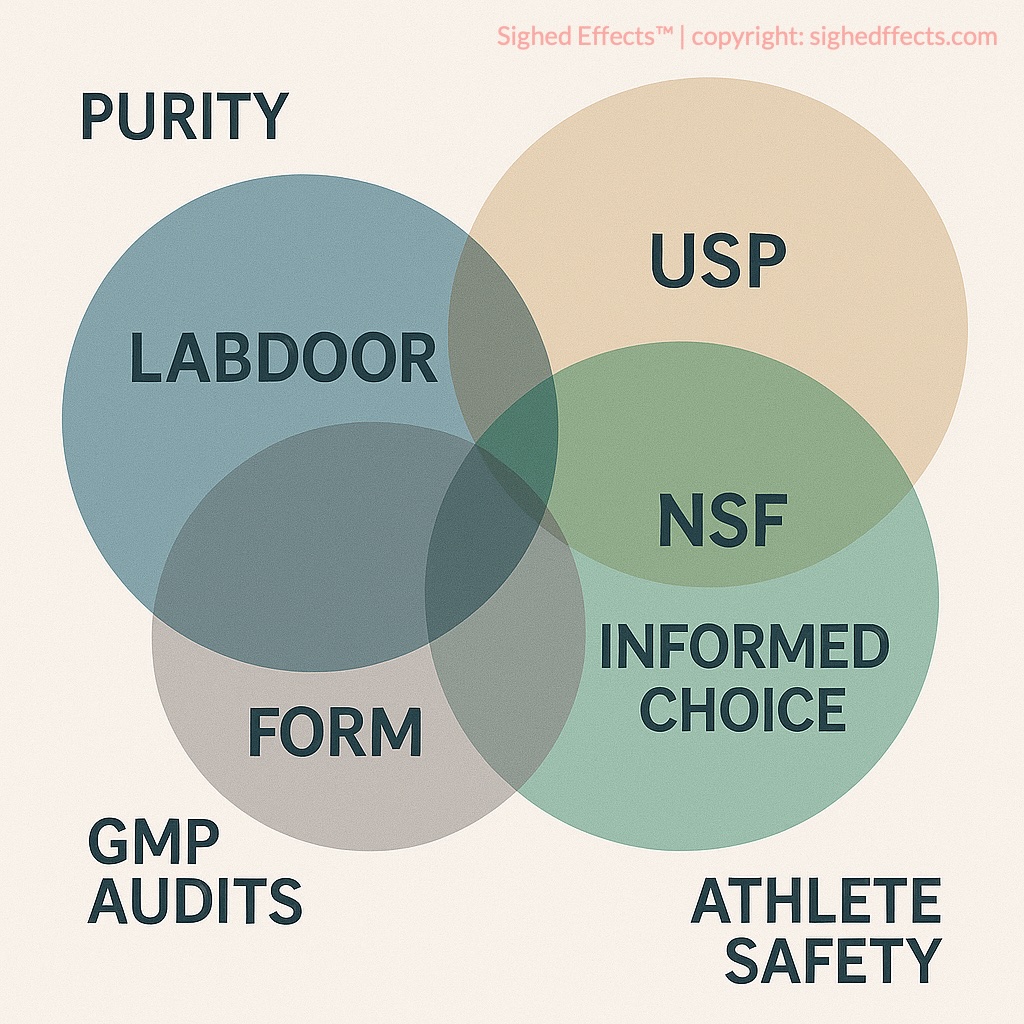

These programs get lumped together as “third-party supplement certifications,” but they’re asking fundamentally different questions.

**NSF** and **USP** are process-oriented. They audit manufacturing facilities, review documentation, verify GMP compliance, and confirm that production systems can consistently deliver what the label promises. USP adds pharmaceutical-grade dissolution and potency testing. NSF Certified for Sport adds banned substance screening. Both require the manufacturer to initiate and pay for the certification process, and both involve ongoing facility audits.

**Informed Choice** is focused specifically on banned substance testing for sports supplements. LGC, the laboratory behind the program, purchases products from retail (similar to Labdoor’s model) and screens for 285+ prohibited substances using ISO 17025-accredited methods. The scope is narrower than NSF or USP, but the analytical depth on banned substances is greater.

**Labdoor** asks: does the finished product on the shelf match its label, and is it free of heavy metal contamination? It doesn’t audit manufacturing facilities, verify GMP compliance, check batch traceability, or screen for banned substances in the way Informed Choice or NSF Certified for Sport do. What it does provide is a consumer-accessible comparison tool: buy a product, test it, publish the results, rank it against competitors.

The practical difference comes down to what you’re trying to verify. If you’re an athlete worried about inadvertent doping, Labdoor won’t help: you need Informed Choice or NSF Certified for Sport. If you want assurance that a manufacturer’s production systems meet pharmaceutical-grade standards, USP is more relevant. If you want to compare five brands of the same supplement based on what independent lab testing found in the bottles, Labdoor is the tool built for that. What none of them can tell you is whether the supplement is worth taking in the first place. That’s a question about evidence, not certification.

What Labdoor is useful for

Despite the scoring methodology issues, Labdoor fills a gap that other certifications don’t cover. For the average consumer comparing supplement options, its rankings offer something rare: actual lab data on whether products deliver what they claim. That data is published for free, organized by category, and updated when retesting occurs.

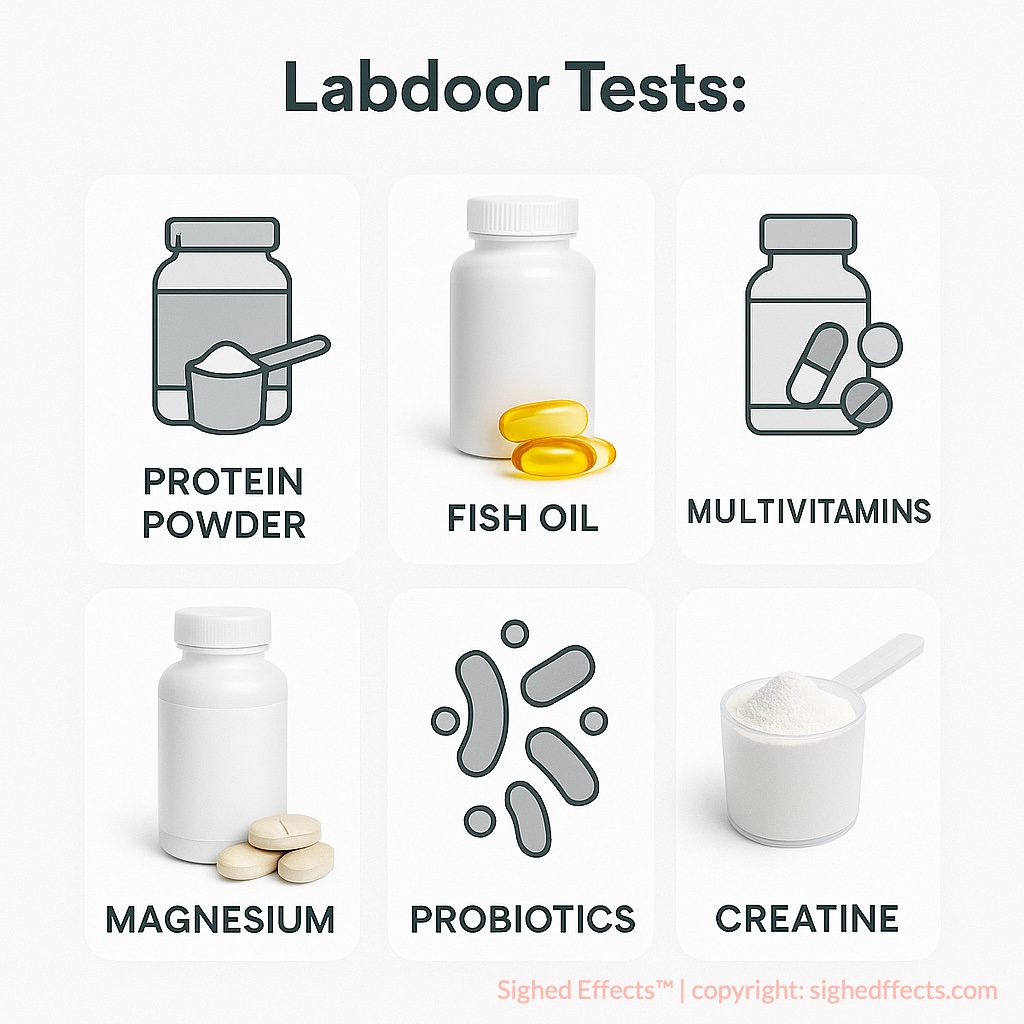

The categories where Labdoor adds the most value are the ones where label fraud is most common. Protein powders are a good example: nitrogen spiking (adding cheap amino acids to inflate protein measurements) is well-documented in the industry, and Labdoor’s testing can catch it. Fish oil supplements are another: oxidation levels, actual EPA/DHA content, and mercury contamination all vary widely between brands, and label claims in this category are notoriously unreliable. Multivitamins, where dozens of ingredients make label accuracy harder to maintain, are also worth checking.

The key is to use Labdoor reports as one input rather than a final verdict. Look at the individual dimension scores, not just the overall number. Check the testing date. If a product scored well on label accuracy and purity but you’re not sure about the “projected efficacy” score, ignore the number you don’t trust and focus on the measurements you care about. The lab data underneath the score is usually more useful than the composite rating.

For categories where Labdoor hasn’t tested a product, or where the test is several years old, the ranking shouldn’t carry much weight. And for anyone in a context where banned substances matter (competitive sports, military service, workplace drug testing), Labdoor is not the right tool. Its screening panel doesn’t include the WADA-prohibited substances that Informed Choice and NSF Certified for Sport cover.

What Labdoor is and isn’t

Labdoor does something valuable: it buys supplements off the shelf and tells you what’s actually in them. In an industry where the default is trusting whatever the manufacturer printed on the label, that’s a meaningful service. The fact that it’s free and publicly accessible makes it more useful to more people than most certification programs.

But the scoring system has real blind spots. The opaque weighting formula can produce ratings that obscure the problems they’re supposed to flag. The single-batch testing model can’t guarantee consistency. And the affiliate revenue model, while not disqualifying, is a different incentive structure than what drives nonprofit standards organizations.

Use Labdoor the way you’d use any consumer comparison tool: as a starting point, with your own judgment applied to the data it provides. The lab measurements are the valuable part. The composite score is a convenience that sometimes misleads. And no quality certification, Labdoor or otherwise, tells you whether the supplement is worth taking in the first place. That question starts with the evidence for the ingredient, not the rating on the bottle.

This article is part of our Certifications hub: Our deep dives into third-party testing, purity standards, and label verification systems across the supplement industry.